EU MDR, FDA 510(k) and NHS DTAC

How Cybersecurity Nonconformities Happen, and How to Recover

Cyber Alchemy × Mantra Systems — Episode 2

This article is published in partnership with Mantra Systems. Cyber Alchemy focuses on cybersecurity, helping teams develop and evidence security for software-enabled and connected medical devices. Mantra Systems specialises in regulatory strategy and clinical evidence for UKCA and EU MDR/IVDR pathways. Together, we’re producing a practical series for MedTech teams: what to build, what to defer, and how to avoid unnecessary rework when moving between UK, NHS procurement, and EU routes.

You had a clinical idea that could genuinely help people. Cybersecurity wasn’t part of the plan. That’s understandable. It’s also where things go wrong.

You didn’t start this because you wanted to spend months arguing about patch policies and evidence chains. You started it because you saw something in clinical practice that could be done better: a gap, a risk, a way of helping patients that the current system wasn’t delivering. That’s a good reason to build a medical device. It’s also, if we’re being straight with you, the reason most founding teams arrive at cybersecurity late.

Not because they ignored it. Because they were focused on the thing that actually matters, the clinical problem, and cybersecurity felt like an administrative layer to deal with later. Then later arrived. And it was harder, and more expensive, than anyone had budgeted for.

We’ve seen this pattern enough times that it’s no longer surprising. What still surprises teams is how the frameworks catch you out. It’s rarely “you ignored a rule.” It’s usually something more avoidable than that.

This article covers how it happens, the four patterns we see most often in practice, and what recovery looks like when you’re already in a non-conformity. If you’re approaching an audit with concerns, or you’ve already received findings you need to close, read on.

Key takeaways

- EU MDR and FDA Section 524B share a common accountability logic: you define your own cybersecurity commitments, and those commitments become the standard you’re assessed against. Missing your own stated policy is how most cybersecurity nonconformities happen, not missing some obscure rule you’d never heard of.

- DTAC operates differently: the NHS trust making the procurement decision acts as the assessor, rather than an independent regulatory body, but the same failure mode applies.

- The four patterns that cause the most pain: security built but never documented, SBOMs (software bill of materials) treated as one-off submission documents, patch policies that couldn’t withstand the realities of clinical deployment, and outsourced development where security evidence wasn’t in the contract.

- Recovery is possible. But it has to be targeted, and the window is tighter than most teams expect. Understanding whether you have a documentation gap or a genuine security control gap changes everything about how you respond.

- Closing the NCR (Nonconformance Report) is necessary. It isn’t the finish line. The artefacts that get you through recovery are also the ones that mean you don’t go back.

Companion perspective (Mantra Systems)

This article focuses on cybersecurity nonconformities: what causes them, what recovery involves, and what to build to prevent recurrence. The companion article from Mantra Systems covers where regulatory and clinical nonconformities typically occur and how to recover from them quickly and effectively.

► Read Mantra Systems’ expert perspective:

► Book a joint Cyber Alchemy × Mantra Systems review

The four patterns we see most often

These are the situations we encounter when teams come to us after receiving findings, or when they are approaching an audit, and something doesn’t feel right. Each pattern is fixable. Each one is also avoidable if you know what to look for.

| 01 | The security that exists in the product, but not on paper |

| Multi-factor authentication is working. Access controls are in place. The developers did solid work. But when a notified body reviewer asks for evidence, or when a potential NHS customer reviews your DTAC submission, there is no threat model, the security requirements were never defined, and there is no verification record showing that those requirements have been met. It is important to remember that neither a notified body under EU MDR nor the FDA during a 510(k) review will usually generate missing cybersecurity evidence for you by running its own independent penetration test of the device. Their role is to assess the conformity or adequacy of the evidence you submit. If key cybersecurity evidence is missing, unclear or not traceable to the version under review, that can lead to questions, delays and, where the gap is material, formal findings. |

We’ve worked with companies where we reconstructed the entire evidence chain after the fact: identified the original threat, documented the mitigation rationale, wrote up the security requirement, and produced verification evidence for controls that had been live in production for months. The security was genuinely good. The documentation simply didn’t exist.

Development teams focus on building things that work. That’s their job. The threat modelling → mitigation → security requirement → verification chain that reviewers need to see isn’t a natural output of a development sprint. It has to be deliberately built in parallel, as a separate activity. When teams are moving fast on limited budgets, it’s often the thing that gets quietly deferred.

The regulator only sees what’s in your documentation. If MFA was never linked to an identified threat, never specified as a security requirement, and never formally verified, it doesn’t exist from a regulatory evidence perspective, even if it’s running perfectly in production.
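The evidence chain for a single control can be held as data and checked for missing links. The sketch below is a minimal Python illustration, not a format any reviewer prescribes. The `ControlEvidence` record, its field names and IDs such as `TM-04` are all hypothetical, and the control itself stands in for the mitigation. It reports which links are missing for each control, so the undocumented MFA case described above shows up as three gaps.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ControlEvidence:
    """A security control plus the links in its evidence chain (hypothetical schema)."""
    control: str
    threat_id: Optional[str] = None         # threat model entry the control mitigates
    requirement_id: Optional[str] = None    # security requirement that specifies the control
    verification_ref: Optional[str] = None  # record showing the requirement was verified


def missing_links(item: ControlEvidence) -> list[str]:
    """Return the evidence-chain links that are absent for this control."""
    links = {
        "threat": item.threat_id,
        "requirement": item.requirement_id,
        "verification": item.verification_ref,
    }
    return [name for name, ref in links.items() if not ref]


controls = [
    ControlEvidence("MFA on clinician login"),  # live in production, never documented
    ControlEvidence("TLS for device-to-cloud traffic", "TM-04", "SR-12", "VER-031"),
]

for c in controls:
    gaps = missing_links(c)
    print(f"{c.control}: {'complete' if not gaps else 'missing ' + ', '.join(gaps)}")
# MFA on clinician login: missing threat, requirement, verification
# TLS for device-to-cloud traffic: complete
```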

| 02 | The SBOM that stopped at submission |

| A Software Bill of Materials is submitted as part of the conformity assessment or 510(k) package. It’s accurate at that point. Then the product gets updated, libraries change, new dependencies come in, and the SBOM stays exactly as it was. |

In December 2021, Log4Shell (CVE-2021-44228) was disclosed: a critical vulnerability in Apache Log4j, one of the most widely used software libraries in existence. Manufacturers without a maintained SBOM spent weeks in manual investigation trying to work out whether their devices were affected. Those with an automated, release-tied SBOM could answer that question in minutes.
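Answering "are we affected?" quickly depends on being able to query the SBOM for every shipped release. The following is a minimal Python sketch. It assumes SBOMs are archived per release in CycloneDX JSON format, a real SBOM standard whose `components` entries carry `group`, `name` and `version` fields. The release names, the inline SBOM contents and the `releases_below` helper are hypothetical. The version comparison is deliberately naive, and `2.17.1` is used only as an illustrative threshold; the real affected range must come from the relevant advisory.

```python
import json
import re

# Hypothetical archive: one CycloneDX JSON SBOM per shipped release.
SBOMS = {
    "v1.3.0": json.loads("""{
        "bomFormat": "CycloneDX", "specVersion": "1.5",
        "components": [
            {"type": "library", "group": "org.apache.logging.log4j",
             "name": "log4j-core", "version": "2.14.1"},
            {"type": "library", "group": "com.fasterxml.jackson.core",
             "name": "jackson-databind", "version": "2.13.0"}
        ]
    }"""),
    "v1.4.0": json.loads("""{
        "bomFormat": "CycloneDX", "specVersion": "1.5",
        "components": [
            {"type": "library", "group": "org.apache.logging.log4j",
             "name": "log4j-core", "version": "2.17.1"}
        ]
    }"""),
}


def version_tuple(version: str) -> tuple[int, ...]:
    """Naive parse: '2.14.1' -> (2, 14, 1); keeps only the leading digits of each part."""
    parts = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    return tuple(parts)


def releases_below(sboms: dict, group: str, name: str, fixed_in: str) -> dict[str, str]:
    """Map each release that ships group:name at a version below fixed_in to that version."""
    fixed = version_tuple(fixed_in)
    hits = {}
    for release, sbom in sboms.items():
        # Top-level components only; nested component trees are ignored in this sketch.
        for comp in sbom.get("components", []):
            if comp.get("group") == group and comp.get("name") == name:
                if version_tuple(comp.get("version", "0")) < fixed:
                    hits[release] = comp["version"]
    return hits


print(releases_below(SBOMS, "org.apache.logging.log4j", "log4j-core", "2.17.1"))
# {'v1.3.0': '2.14.1'}
```

A production pipeline would more likely match on the package URL (`purl`) and feed the results into the triage workflow rather than print them.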

The regulatory consequence of a static SBOM isn’t just a documentation gap. It means your postmarket vulnerability monitoring is compromised. Under both EU MDR and FDA Section 524B, the SBOM obligation applies throughout the product lifecycle, not just at the point of submission.

The fix is a process change more than a documentation effort:

- SBOMs should be generated automatically at each build or release

- SBOMs should be linked to a CVE monitoring workflow

- Triage decisions must be documented: “we assessed this CVE, here is our conclusion and why”

One worked example of detection → assessment → decision → closure is worth more to a reviewer than a perfect SBOM last touched eighteen months ago.
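A triage log entry only helps if every stage is actually filled in. Below is a minimal Python sketch of such a record, assuming a hypothetical in-house schema. The `TriageRecord` and `Decision` names, the decision options, the component and release names, the dates, the assessment text and the `REG-118` reference are all invented for illustration. Only the CVE identifier is real. The record reports which of assessment, decision and closure are still empty.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Decision(Enum):
    NOT_AFFECTED = "not affected"
    MITIGATED = "mitigated by existing control"
    PATCH_REQUIRED = "patch required"


@dataclass
class TriageRecord:
    """One CVE triage entry covering detection, assessment, decision and closure."""
    cve_id: str
    component: str
    release: str
    detected: date                       # detection: when monitoring flagged the CVE
    assessment: str = ""                 # assessment: does it apply to this device, and why
    decision: Optional[Decision] = None  # decision: the documented conclusion
    closed: Optional[date] = None        # closure: when the record was closed
    closure_evidence: str = ""           # e.g. patched release or test report reference

    def missing_stages(self) -> list[str]:
        """List the stages a reviewer would find empty."""
        missing = []
        if not self.assessment:
            missing.append("assessment")
        if self.decision is None:
            missing.append("decision")
        if self.closed is None or not self.closure_evidence:
            missing.append("closure")
        return missing


record = TriageRecord("CVE-2021-44228", "log4j-core 2.14.1", "v1.3.0", date(2021, 12, 10))
print(record.missing_stages())  # ['assessment', 'decision', 'closure']

record.assessment = "Component ships in v1.3.0 and logs externally supplied strings."
record.decision = Decision.PATCH_REQUIRED
record.closed = date(2022, 1, 14)
record.closure_evidence = "v1.4.0 (log4j-core 2.17.1), regression report REG-118"
print(record.missing_stages())  # []
```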

| 03 | The patch policy that couldn’t survive clinical reality |

| A manufacturer writes a patch policy with timelines that look credible. It gets submitted. Then postmarket reality arrives. Medical devices in clinical settings are not consumer apps. |

A critical patch may require a full regression test cycle before deployment, because a software update that introduces instability is itself a patient safety risk. Devices may be in air-gapped environments. Hospitals have their own change control processes. High-uptime requirements limit the availability of maintenance windows.

None of this is unusual. All of it is foreseeable. But if the policy says 14 days and the deployment took 45, that’s a finding even when the delay was clinically justified and sensible.

The answer isn’t to write a weaker policy. It’s to write an honest one. Define severity tiers. Map them to how your devices actually get deployed. Include a documented exception process. A realistic policy you can consistently deliver is always more defensible than an impressive one you’ve already missed.
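Severity tiers mapped to deployment routes, plus an exception path, can be expressed as a simple lookup. This is a minimal Python sketch under stated assumptions. The tier names, deployment routes, day counts, the `PatchEvent` record and the exception reference format are all hypothetical placeholders, not recommended values. It runs the same 45-day deployment against a flat 14-day policy and against a tiered one.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tiered policy: maximum days from fix availability to deployment,
# keyed by (severity, deployment route). Placeholder numbers, not recommendations.
TIERED_POLICY = {
    ("critical", "cloud"): 14,
    ("critical", "on_premise"): 60,  # allows for full regression testing and hospital change control
    ("high", "cloud"): 30,
    ("high", "on_premise"): 90,
}

# The "impressive" alternative: one number for every case.
FLAT_POLICY = {key: 14 for key in TIERED_POLICY}


@dataclass
class PatchEvent:
    vuln_id: str
    severity: str
    deployment: str
    days_to_deploy: int
    exception_ref: Optional[str] = None  # approved exception raised under the policy's own process


def assess(event: PatchEvent, policy: dict) -> str:
    """Compare what actually happened with what the policy committed to."""
    limit = policy[(event.severity, event.deployment)]
    if event.days_to_deploy <= limit:
        return "within policy"
    if event.exception_ref:
        return f"over {limit}-day target under documented exception {event.exception_ref}"
    return f"nonconformity: {event.days_to_deploy} days against a {limit}-day commitment"


event = PatchEvent("EXAMPLE-VULN-1", "critical", "on_premise", days_to_deploy=45)
print(assess(event, FLAT_POLICY))    # nonconformity: 45 days against a 14-day commitment
print(assess(event, TIERED_POLICY))  # within policy
```

The deployment and its timeline are the same in both runs. Only the commitment changes, and with it, whether there is a finding.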

| 04 | The outsourced developer who didn’t know the rules applied |

| A large proportion of early-stage MedTech products are built by external development teams. Development teams build to specification. If security requirements weren’t in the specification, the developers produced exactly what was asked for. No one failed. There’s also no usable regulatory evidence. |

More practically, if the contract didn’t require the developer to produce an SBOM, there may be no mechanism to get one. If security testing wasn’t a contracted deliverable, it may not have happened. Going back to a developer after the product is built to ask for evidence that wasn’t part of the agreement is an expensive conversation with an uncertain outcome.

This is entirely preventable at the contract stage. Security requirements, evidence deliverables, SBOM obligations, vulnerability disclosure procedures, and audit access rights must be in the contract before a single line of code is written. Once the product is built and the relationship has moved on, retrofitting these obligations is slow, costly, and sometimes impossible.

How the frameworks actually catch you

The non-conformity that lands on your desk usually isn’t “you failed to meet a regulatory requirement.” It’s “you told us you’d do X, and you didn’t.”

That distinction is important. It means the exposure often doesn’t come from a gap in your security. It comes from a commitment you made in a document, sometimes months earlier and possibly written to sound credible rather than to reflect operational reality, that you then couldn’t keep.

Most compliance frameworks work this way.

| Put Simply |

| Think of a building inspection. The inspector doesn’t just check the building against a universal rulebook. They check it against the plans you submitted. If your plans said the fire door would be at the end of the corridor, but it isn’t, that’s a finding, even if the building is otherwise safe. In MedTech cybersecurity, your QMS documents and your submission materials are your plans. Whatever you wrote in them is what you’re measured against. Write something ambitious that you can’t deliver, and you’ve created the non-conformity yourself before the auditor arrives. |

EU MDR: “timely” is the only number you’ll find

EU MDR does not prescribe specific patch timelines. The approach is deliberately non-prescriptive: the expectation is that you understand your device, your deployment context, and your risk profile, and that you manage security in a way that’s proportionate to that. The obligations sit inside the General Safety and Performance Requirements (GSPRs) in Annex I, notably GSPR 17.2 (state-of-the-art lifecycle, including information security) and GSPR 17.4 (minimum IT and security requirements for safe operation). The primary cybersecurity guidance document, MDCG 2019-16, calls for “timely” security patch updates. That’s it. No days, no severity tiers, no mandatory cadence.

What the EU MDR does have is a QMS requirement. Whatever you document in that quality management system becomes the standard you’re audited against. If your procedures say critical vulnerabilities will be patched within 14 days, 14 days is now your obligation. Miss it even once, even for a well-documented reason, and you have a non-conformity.

A manufacturer who wrote an ambitious patch policy to look credible during conformity assessment has created a much harder problem for their postmarket team. We’ve seen this. It’s an avoidable problem.

FDA Section 524B: two tiers, your numbers

The US framework under Section 524B creates two explicit tiers:

- Known unacceptable vulnerabilities: address on a “reasonably justified regular cycle”

- Critical vulnerabilities that could cause uncontrolled risks: address “as soon as possible out of cycle”

Neither tier contains a specific number of days. What Section 524B requires is that you state your timelines in the premarket submission, and those stated timelines become part of the basis on which the FDA assesses “reasonable assurance of cybersecurity.” FDA’s 2025 updated final guidance also addresses expectations around vulnerability communication and coordinated response timelines, though the specific requirements depend on device type and context, and manufacturers should refer directly to the current guidance for their situation.

The pattern we see: manufacturers state timelines that sound rigorous in a submission document but don’t reflect the realities of clinical deployment. What gets written in the submission is what gets measured postmarket. When the stated timeline and the deployment reality diverge, that gap is where findings come from.

DTAC: not a single timeline, but a framework that bites

DTAC doesn’t prescribe patch timelines either. What it requires is evidence of a credible, maintained security posture, including Cyber Essentials certification, penetration testing evidence, and alignment with the DSIT Software Security Code of Practice.

Cyber Essentials is where teams sometimes create their own problem. Cyber Essentials (and CE Plus) expects high- and critical-risk security updates to be applied within 14 days of release on in-scope systems, and that 14-day figure gets quoted in various places and treated as the DTAC standard for device patching. It isn’t, or at least not universally. What “timely” means in practice under DTAC depends on the vulnerability, the device, the deployment environment, and, critically, what your documented policy actually says and justifies.

This is one of those areas where a short conversation before you write the policy saves a long and expensive conversation after you’ve committed to something you can’t keep. Pinning yourself to 14 days because you’ve seen that number somewhere, without thinking through whether your deployment reality actually supports it, is the kind of thing that generates findings. A defensible, risk-proportionate policy that reflects your actual environment is worth far more to an NHS trust than an impressive number you’ve already missed.

| The same mistake, across all three frameworks |

| A manufacturer committed to patching critical vulnerabilities within 14 days. A critical patch came in. In practice, their hospital deployments required full regression testing before any software update could go out, a clinical safety requirement, not a shortcut. Actual deployment took 45 days. Their policy said 14. Their patch log said 45. That mismatch, not their intentions and not even the patch itself, was the non-conformity. |

What recovery actually looks like

When a non-conformity is raised by a notified body, an FDA reviewer, or an NHS assessor, the first thing to understand is that it isn’t necessarily fatal. But it does have to be handled correctly, and the window for doing that is tighter than most teams expect.

Findings are categorised, and the category determines your options.

Major findings represent a systemic issue with potential patient safety impact. Certification cannot proceed until they’re resolved. Response windows vary by notified body and framework, but they are typically tight, with limited opportunity to iterate, which means you need to structure recovery from day one, not day thirty.

Minor findings must be corrected within defined timescales, but they don’t block certification. What catches teams out is what happens when a minor finding recurs in consecutive audits: if the root cause wasn’t genuinely addressed, repeat findings get upgraded. That’s how a manageable situation becomes a serious one.

Recovery in practice: four stages

1. Triage the finding accurately

Work out exactly what was cited and, more importantly, whether you have a documentation gap or a genuine security control gap. These are different problems with different timelines and different costs. A documentation gap, where the security work was done but never evidenced, is faster to close than a control gap, where the measure genuinely wasn’t in place. Confusing the two wastes time you don’t have.
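The distinction can be written down as a two-question triage rule. This is a minimal Python sketch that assumes you can answer both questions for the cited control: is the measure present in the release under review, and is there traceable evidence for it? The `GapType` labels and the `NEXT_STEPS` wording are hypothetical summaries of the recovery paths described in this article, not a formal classification used by any regulator.

```python
from enum import Enum


class GapType(Enum):
    DOCUMENTATION_GAP = "documentation gap"
    CONTROL_GAP = "control gap"
    NO_GAP = "no gap for this control"


NEXT_STEPS = {
    GapType.DOCUMENTATION_GAP: "reconstruct threat -> mitigation -> requirement -> verification",
    GapType.CONTROL_GAP: "implement the control, verify it, then evidence both",
    GapType.NO_GAP: "re-read the finding: the issue may be scope or version traceability",
}


def classify(control_in_release: bool, evidence_traceable: bool) -> GapType:
    """Triage rule: a missing control outranks missing paperwork."""
    if not control_in_release:
        # Evidence alone cannot close this: the measure itself is absent.
        return GapType.CONTROL_GAP
    if not evidence_traceable:
        # The measure exists in the product; only the evidence chain is missing.
        return GapType.DOCUMENTATION_GAP
    return GapType.NO_GAP


gap = classify(control_in_release=True, evidence_traceable=False)
print(gap.value, "->", NEXT_STEPS[gap])
```

Checking the control first is deliberate. Evidence that describes a measure the product doesn’t actually have is still a control gap.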

2. Reconstruct or build the evidence chain

For documentation gaps, work backwards through the threat model, mitigation, requirement, and verification chain to produce the evidence that should have been there from the start. For control gaps: implement the missing measure, verify it, and produce evidence of both.

3. Produce a credible corrective action plan

The reviewer needs to see not just that the specific gap has been closed, but that you’ve understood why it happened and changed something to stop it happening again. A structured corrective action with a genuine root cause analysis lands very differently from a one-line response. Reviewers who do this regularly can tell the difference immediately.

4. Build what prevents the next one

Closing the current NCR is necessary. It isn’t sufficient. The artefacts that get you through recovery include a maintained SBOM, a release-tied evidence index, and a patch policy that reflects deployment reality. These are also the artefacts that mean your next audit goes differently.

We’ve run this process with companies that came to us after findings had already arrived. The lesson that comes up every time: the gap between security that exists in the product and security that exists in the documentation is almost always closable. But it takes structured, methodical work by someone who understands what the reviewer needs to see, not just what good security looks like.

What you build so you don’t go back

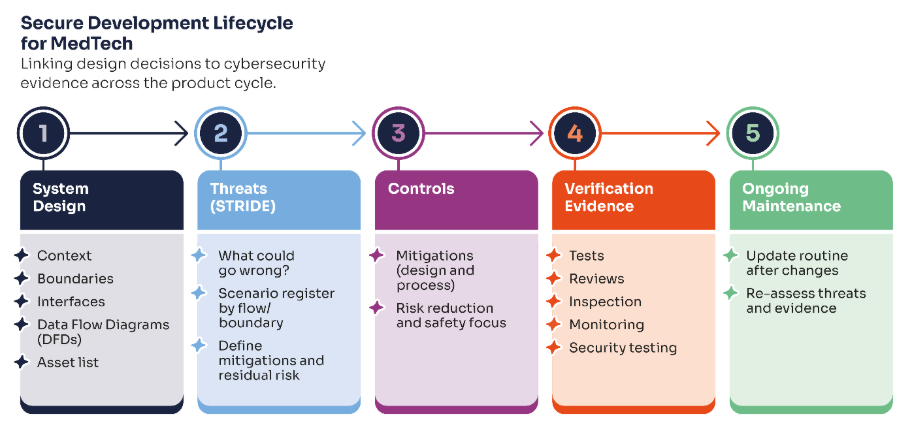

Episode 1 of this series introduced the Security Evidence Pack as the core structural answer. In the specific context of non-conformity prevention, four things matter most.

Your threat model needs to reflect your current product.

A threat model written for an earlier version of the product, or developed without reference to the actual architecture, gives reviewers nothing they can trace forward to the controls in the current release. Version it. Update it when the product changes. It’s a maintained document, not a one-time deliverable.

Your SBOM needs to be a workflow output, not a document.

Generated automatically at each release. Linked to a CVE monitoring workflow. Backed by an up-to-date triage log showing the decisions made on material vulnerabilities. This is what postmarket surveillance obligations under EU MDR and FDA Section 524B require in practice, and it’s also what makes a security incident manageable rather than a crisis.

Your patch policy needs to reflect what you can actually deliver.

Define severity tiers. Map them to your deployment constraints. Include an exception process. Review it before you commit to it in a submission or QMS document, because once it’s written down, it’s the standard.

Your evidence needs to be release-tied.

A penetration test scoped to a system boundary that no longer reflects your current architecture, or a risk register last reviewed before your last major release, creates findings even when the underlying security is sound. Every artefact should clearly reference the product version it covers.
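A release-tied evidence index turns that check into a simple comparison. The sketch below is a minimal Python illustration. It assumes a hypothetical index in which each artefact records the single product version it covers. The artefact list and version numbers are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Artefact:
    name: str
    covers_version: str  # product version the artefact was produced or last reviewed against


def stale_artefacts(index: list[Artefact], current_release: str) -> list[str]:
    """Names of artefacts that do not reference the release under review."""
    return [a.name for a in index if a.covers_version != current_release]


evidence_index = [
    Artefact("Threat model", "3.2.0"),
    Artefact("SBOM", "3.2.0"),
    Artefact("Penetration test report", "2.8.0"),
    Artefact("Risk register", "3.1.0"),
]
print(stale_artefacts(evidence_index, "3.2.0"))
# ['Penetration test report', 'Risk register']
```

Exact matching is deliberately strict. If an artefact legitimately carries forward to a later release, a real index would record the documented impact assessment that justifies it, rather than silently accepting the older version.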

Free download: 10 Core Procurement Artefacts

This is the checklist we use to keep procurement and assurance work from becoming a last-minute scramble. It covers the ten artefacts most frequently requested across DTAC, EU MDR, and private procurement, including security architecture, threat model, SBOM and vulnerability monitoring, VDP/PSIRT route, patch policy, and incident response playbook, plus a suggested cadence for keeping them current.

Next step: Book a joint review

If you’ve received cybersecurity or clinical evidence-related findings and need to close them, or if you’re approaching an audit and want an honest view of where your evidence stands, book a joint review with Cyber Alchemy and Mantra Systems.

In 30 minutes we’ll:

- Assess where your current cybersecurity and clinical evidence stand against the framework you’re targeting

- Identify the gaps most likely to generate findings

- Give you a clear view of what to fix now, what to defer, and what a realistic recovery plan looks like

FAQs

What is a cybersecurity non-conformity under EU MDR?

A finding raised by a notified body indicating that your cybersecurity documentation or controls don’t meet Annex I GSPR requirements or, more commonly, that they don’t meet the commitments you made in your own QMS or technical documentation. Major findings must be resolved before certification can be issued or maintained.

Does EU MDR specify patch timelines?

No. MDCG 2019-16 calls for “timely” updates, and that is the full extent of the prescription. The binding timelines are the ones you document in your QMS. Whatever you commit to becomes the standard you’re audited against.

Does FDA Section 524B specify patch timelines?

It specifies two tiers: a “reasonably justified regular cycle” for known unacceptable vulnerabilities, and “as soon as possible out of cycle” for critical vulnerabilities that could cause uncontrolled risks. Neither contains a specific number of days. You state your own timelines in the premarket submission, and those become your obligation. FDA’s 2025 final guidance addresses vulnerability communication expectations in more detail, and manufacturers should refer to the current guidance for their specific device context.

Does DTAC require specific patch timelines?

DTAC itself doesn’t prescribe timelines. Cyber Essentials, which DTAC requires, sets a 14-day expectation for high- and critical-risk updates on the systems in its scope, but that figure is not a universal standard for device patching and needs to be interpreted against your device type, deployment environment, and what your documented policy says. Getting this right before committing to it in writing matters.

Can cybersecurity nonconformities be recovered from?

Yes, but the recovery has a specific shape, and the window is typically tight. The key is understanding whether the gap is a documentation problem or a genuine control gap, and producing a corrective action that addresses the root cause rather than just the specific finding.

What’s the most common root cause of cybersecurity NCRs?

In our experience, it’s the gap between the security that exists in the product and the security that exists in the documentation. The evidence chain from threat → control → verification → release-tied evidence is the thing most commonly missing, and the thing reviewers most consistently look for.

The best time to build your cybersecurity evidence pack was before submission. The second-best time is before the finding lands.

About the authors

Neil Richardson, Co-Founder, Cyber Alchemy

Neil co-founded Cyber Alchemy and has over 15 years of experience in cybersecurity consultancy, technical assurance, and strategic advisory. Following the successful exit of his previous cybersecurity company in 2022, he now focuses on vCISO support, security strategy, and helping organisations take a pragmatic, business-aligned approach to cyber risk.

His background includes penetration testing, incident response, ISO 27001, and security consultancy across a wide range of sectors, including Medical Technology and Financial Technology. Neil also co-founded SteelCon, the ethical hacking conference, in 2012 and brings experience from the university sector, alongside a long track record of helping organisations improve resilience and security maturity.

Luke Hill, Senior Security Consultant, Cyber Alchemy

Luke brings deep expertise in security consultancy, penetration testing and regulatory-aligned security for the Health and Social Care sector. He leads Cyber Alchemy’s technical and regulatory efforts in the MedTech space, supporting a broad range of MedTech companies in building resilient devices and applications that comply with complex UK, US, and EU regulations.

Related: Episode 1 — EU MDR & NHS DTAC Cybersecurity Requirements for UK Market Entry

Related case study: See how Cyber Alchemy supported Adaptix